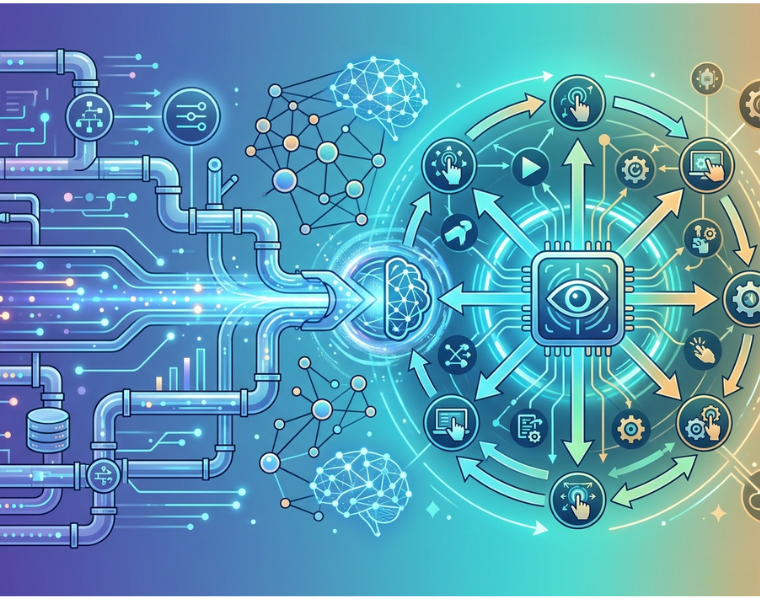

Your architecture processes terabytes of data every day. Pipelines run on schedule. ETL jobs succeed. Dashboards refresh without failure. Yet the system stops at insight. It does not act.

This is the architectural gap modern enterprises face.

A traditional Data Pipeline is designed for data movement and transformation. An AI agent system is designed for decision making and autonomous execution. The shift is not incremental. It is architectural. Organizations that fail to make this transition will continue to depend on manual orchestration. Those that succeed will operate on self-optimizing systems.

This guide outlines a precise, technical pathway to move from Data Pipeline infrastructure to production-grade AI agents.

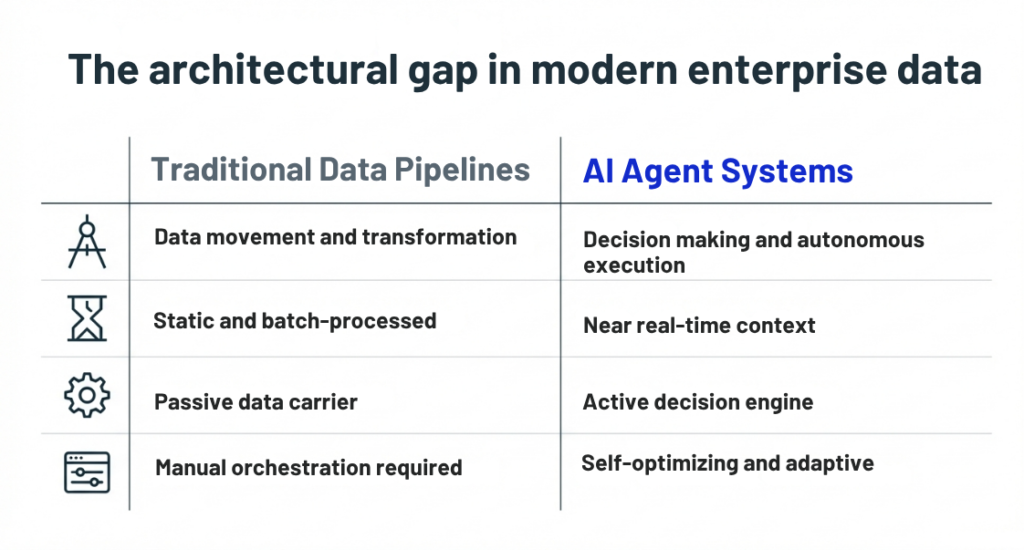

Step 1: Audit and Stabilize Your Data Pipeline

AI agents require near real-time context. Batch pipelines that refresh hourly or nightly leave an agent acting on data that may already be out of date.

Modernize your Data Pipeline by adopting:

- Event-driven architectures using streaming platforms such as Kafka

- Change Data Capture for real-time synchronization

- Micro-batch or stream processing instead of static ETL jobs

- Data contracts to enforce schema consistency across producers and consumers
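As a rough illustration of the data contract idea above, the sketch below checks a record against a declared schema before a consumer accepts it. The `ORDER_CONTRACT` fields and the `validate` helper are hypothetical names chosen for this example; a production setup would more likely rely on a schema registry or a dedicated validation library.

```python
# Hypothetical contract for an "order" event; field names are illustrative only.
ORDER_CONTRACT = {
    "order_id": str,
    "amount": float,
    "currency": str,
}


def validate(record: dict, contract: dict) -> list[str]:
    """Return a list of contract violations; an empty list means the record conforms."""
    errors = []
    for field, expected_type in contract.items():
        if field not in record:
            errors.append(f"missing field: {field}")
        elif not isinstance(record[field], expected_type):
            errors.append(
                f"{field}: expected {expected_type.__name__}, "
                f"got {type(record[field]).__name__}"
            )
    # Reject undeclared fields so producers cannot change the schema silently.
    for field in record:
        if field not in contract:
            errors.append(f"unexpected field: {field}")
    return errors


good = {"order_id": "A-1", "amount": 19.99, "currency": "EUR"}
bad = {"order_id": "A-2", "amount": "19.99"}

print(validate(good, ORDER_CONTRACT))
print(validate(bad, ORDER_CONTRACT))
```

Running the check on the consumer side as well as the producer side means a schema change fails loudly at the boundary instead of surfacing later as a wrong agent decision.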

Technical outcomes:

- Reduced data staleness

- Improved system responsiveness

- Better alignment with agent execution cycles

Without this shift, AI agents will operate on outdated state representations.
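To make the staleness concern concrete, here is a minimal sketch that applies change events to an in-memory view and then asks whether an entry is too old for an agent to rely on. The event shape (`op`, `key`, `row`, `ts`) and the one-minute freshness budget are assumptions for illustration, not the format of any particular Change Data Capture tool.

```python
from datetime import datetime, timedelta, timezone


def apply_change(state: dict, event: dict) -> None:
    """Apply one change event (insert, update, or delete) to an in-memory view."""
    key = event["key"]
    if event["op"] == "delete":
        state.pop(key, None)
    else:  # "insert" and "update" both upsert the row
        state[key] = {"row": event["row"], "updated_at": event["ts"]}


def is_stale(entry: dict, now: datetime, max_age: timedelta) -> bool:
    """True when the entry is older than the freshness budget."""
    return now - entry["updated_at"] > max_age


t0 = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
state: dict = {}
events = [
    {"op": "insert", "key": "sku-1", "row": {"stock": 40}, "ts": t0},
    {"op": "update", "key": "sku-1", "row": {"stock": 35}, "ts": t0 + timedelta(seconds=30)},
    {"op": "insert", "key": "sku-2", "row": {"stock": 12}, "ts": t0 + timedelta(seconds=45)},
    {"op": "delete", "key": "sku-2", "row": None, "ts": t0 + timedelta(seconds=50)},
]
for event in events:
    apply_change(state, event)

now = t0 + timedelta(minutes=5)
print(state["sku-1"]["row"])
print(is_stale(state["sku-1"], now, max_age=timedelta(minutes=1)))
```

An agent can use a check like `is_stale` to refuse to act, or to fall back to a slower authoritative lookup, when its view of the world is older than the task tolerates.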

Step 2: Build a Semantic and Contextual Data Layer

AI agents do not operate directly on raw tables. They require structured context.

Introduce a semantic layer that enables machine reasoning:

- Knowledge graphs to model relationships between entities

- Vector embeddings for unstructured data such as text and logs

- Feature stores to serve consistent, versioned inputs to models

- Ontologies to define domain-specific meanings
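The knowledge graph item can be pictured as a set of subject, predicate, object triples that an agent traverses. The sketch below is a toy in-memory version; the `KnowledgeGraph` class and the entity names are hypothetical, and a real deployment would typically sit on a graph database.

```python
from collections import defaultdict


class KnowledgeGraph:
    """Toy triple store: subject -> list of (predicate, object)."""

    def __init__(self) -> None:
        self._out = defaultdict(list)

    def add(self, subject: str, predicate: str, obj: str) -> None:
        self._out[subject].append((predicate, obj))

    def objects(self, subject: str, predicate: str) -> list[str]:
        """All objects linked to `subject` by `predicate`."""
        return [o for p, o in self._out.get(subject, []) if p == predicate]

    def follow(self, start: str, predicates: list[str]) -> list[str]:
        """Walk a chain of predicates from `start` and return the entities reached."""
        frontier = [start]
        for predicate in predicates:
            frontier = [o for node in frontier for o in self.objects(node, predicate)]
        return frontier


kg = KnowledgeGraph()
kg.add("pump-7", "located_in", "plant-A")
kg.add("plant-A", "managed_by", "team-ops")
kg.add("pump-7", "feeds", "line-3")

# Who is responsible for the site where pump-7 is installed?
print(kg.follow("pump-7", ["located_in", "managed_by"]))
```

The multi-hop `follow` call is the kind of relationship query that is awkward to express over raw tables but natural once entities and relations are modeled explicitly.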

Key capabilities unlocked:

- Context-aware querying

- Improved retrieval accuracy for agent reasoning

- Cross-system data interoperability
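Retrieval over embeddings can be sketched in a few lines. The example below uses a deliberately crude stand-in for an embedding (word counts) so that it runs with the standard library alone; in practice the `embed` function would call a learned embedding model, and the document IDs and log texts here are invented for illustration.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Stand-in embedding: bag-of-words counts. A real system would use a learned model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, docs: dict[str, str], k: int = 2) -> list[str]:
    """Return the IDs of the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(docs, key=lambda doc_id: cosine(q, embed(docs[doc_id])), reverse=True)
    return ranked[:k]


docs = {
    "log-1": "pump vibration exceeded threshold",
    "log-2": "invoice payment received",
    "log-3": "pump restarted after vibration alarm",
}

print(retrieve("pump vibration alarm", docs))
```

The ranking logic stays the same when the toy `embed` is swapped for a real model; only the vector representation changes.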

Step 3: Develop Decision Intelligence and Agent-Ready Models

Moving beyond analytics requires models that support decision logic.

Focus on:

- Supervised learning models for classification and prediction

- Reinforcement learning for adaptive decision policies

- Graph-based models for relationship inference

- Retrieval-Augmented Generation for contextual reasoning over large datasets

Implementation details:

- Expose models via APIs for agent consumption

- Maintain version control and monitoring for model drift

- Use offline and online evaluation pipelines
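Monitoring for model drift can start with something as simple as comparing the distribution of live inputs or scores against a baseline. The sketch below computes the Population Stability Index over shared bins. The bin edges, the sample values, and the 0.2 alert threshold are assumptions for this example and should be tuned per model.

```python
import math


def psi(expected: list[float], actual: list[float], edges: list[float]) -> float:
    """Population Stability Index between two samples over shared bin edges."""

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * (len(edges) + 1)
        for v in values:
            idx = sum(v >= e for e in edges)  # index of the bin containing v
            counts[idx] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    p, q = proportions(expected), proportions(actual)
    return sum((a - e) * math.log(a / e) for e, a in zip(p, q))


DRIFT_THRESHOLD = 0.2  # assumed alert level, not a universal constant

edges = [0.25, 0.5, 0.75]
baseline = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
live = [0.6, 0.7, 0.8, 0.9, 0.8, 0.9, 0.7, 0.95]

score = psi(baseline, live, edges)
print(f"PSI={score:.2f}, drift={'yes' if score > DRIFT_THRESHOLD else 'no'}")
```

A check like this fits naturally into the online evaluation pipeline: it needs no labels, so it can flag a shift before outcome data arrives.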

Use cases:

- Predictive maintenance with automated response triggers

- Demand forecasting integrated with supply adjustments
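The predictive maintenance case can be read as a mapping from a model's output to an operational response. A minimal sketch follows, assuming the model returns a failure probability; the thresholds and action names are hypothetical and would come from the business in practice.

```python
def plan_response(failure_probability: float) -> str:
    """Map a predicted failure probability to an automated response (illustrative thresholds)."""
    if not 0.0 <= failure_probability <= 1.0:
        raise ValueError("failure_probability must be between 0 and 1")
    if failure_probability >= 0.8:
        return "schedule_shutdown"
    if failure_probability >= 0.5:
        return "open_maintenance_ticket"
    return "no_action"


for p in (0.1, 0.6, 0.9):
    print(p, plan_response(p))
```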

At this stage, AI consulting services help align model outputs with operational KPIs.

Step 4: Implement Agent Orchestration and Tooling Layers

AI agents require an execution framework that connects reasoning with action.

Core components:

- Orchestration frameworks to manage multi-step workflows

- Tool integration layers for API calls and system interaction

- Memory modules for maintaining state across tasks

- Prompt engineering and policy constraints for controlled behavior
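The components above can be sketched as a small class: a tool registry, a policy allow-list, and a memory of past steps. The reasoning step that chooses which tool to call (typically a language model) is left out, and `BoundedAgent`, `lookup_ticket`, and `close_ticket` are hypothetical names used only for this example.

```python
from typing import Callable


def lookup_ticket(ticket_id: str) -> str:
    return f"ticket {ticket_id}: open"


def close_ticket(ticket_id: str) -> str:
    return f"ticket {ticket_id}: closed"


class BoundedAgent:
    """Executes only allow-listed tools and remembers every step it takes."""

    def __init__(self, tools: dict[str, Callable[..., str]], allowed: set[str]) -> None:
        self.tools = tools
        self.allowed = allowed
        self.memory: list[dict] = []

    def act(self, tool_name: str, **kwargs) -> str:
        if tool_name not in self.allowed or tool_name not in self.tools:
            # Policy constraint: unknown or non-permitted tools are never executed.
            result = f"denied: {tool_name}"
        else:
            result = self.tools[tool_name](**kwargs)
        # Memory module: keep state across steps of the task.
        self.memory.append({"tool": tool_name, "args": kwargs, "result": result})
        return result


agent = BoundedAgent(
    tools={"lookup_ticket": lookup_ticket, "close_ticket": close_ticket},
    allowed={"lookup_ticket"},  # read-only to begin with
)
print(agent.act("lookup_ticket", ticket_id="T-42"))
print(agent.act("close_ticket", ticket_id="T-42"))
```

Keeping the allow-list separate from the tool registry lets you widen an agent's permissions by changing configuration rather than code.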

Deployment strategy:

- Start with bounded agents handling specific tasks

- Integrate with enterprise systems such as CRM, ERP, or ticketing platforms

- Implement logging and traceability for every agent action
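For traceability, each agent action can be written as one structured record. The sketch below emits a JSON line per action; the field names and the `trace_record` helper are assumptions, and the destination (file, log pipeline, database) is left open.

```python
import json
import uuid
from datetime import datetime, timezone


def trace_record(agent: str, action: str, inputs: dict, outcome: str) -> str:
    """Serialize one agent action as a JSON line suitable for an append-only audit log."""
    return json.dumps(
        {
            "trace_id": str(uuid.uuid4()),
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "action": action,
            "inputs": inputs,
            "outcome": outcome,
        },
        sort_keys=True,
    )


line = trace_record(
    agent="ticket-triage",
    action="lookup_ticket",
    inputs={"ticket_id": "T-42"},
    outcome="ticket T-42: open",
)
print(line)
```

A unique `trace_id` per action makes it possible to join the audit log with downstream system logs when reconstructing why an agent did something.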

This transforms the Data Pipeline from a passive data carrier into an active decision engine.

Step 5: Establish Observability, Feedback, and Governance

Production-grade AI agents require continuous monitoring and control.

Implement:

- Observability pipelines for tracking agent decisions and latency

- Feedback loops using user interactions and outcome validation

- Human-in-the-loop checkpoints for high-risk operations

- Policy enforcement for compliance, security, and ethical constraints
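A human-in-the-loop checkpoint can be expressed as a gate in front of the action. In this sketch the risk score, the 0.7 threshold, and the `approve` callback are all assumed for illustration; in a real system the approval would come from a review queue or ticketing workflow rather than an in-process function.

```python
from typing import Callable

HIGH_RISK_THRESHOLD = 0.7  # assumed cut-off for requiring human sign-off


def execute_with_checkpoint(
    action: Callable[[], str],
    risk_score: float,
    approve: Callable[[], bool],
) -> str:
    """Run low-risk actions directly; require human approval for high-risk ones."""
    if risk_score >= HIGH_RISK_THRESHOLD and not approve():
        return "blocked: human approval not granted"
    return action()


def refund() -> str:
    return "refund issued"


print(execute_with_checkpoint(refund, risk_score=0.2, approve=lambda: False))
print(execute_with_checkpoint(refund, risk_score=0.9, approve=lambda: False))
print(execute_with_checkpoint(refund, risk_score=0.9, approve=lambda: True))
```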

Critical metrics:

- Decision accuracy

- Task completion rate

- Latency and throughput

- Drift in model performance
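Several of these metrics can be derived directly from the agent's action log. The sketch below assumes each log record carries `completed`, `correct`, and `latency_ms` fields (hypothetical names) and computes completion rate, decision accuracy, and a nearest-rank 95th percentile latency.

```python
import math


def summarize(records: list[dict]) -> dict:
    """Compute basic agent metrics from a list of per-task log records."""
    n = len(records)
    if n == 0:
        raise ValueError("no records to summarize")
    latencies = sorted(r["latency_ms"] for r in records)
    # Nearest-rank percentile: smallest value with at least 95% of samples at or below it.
    p95_index = math.ceil(0.95 * n) - 1
    return {
        "task_completion_rate": sum(r["completed"] for r in records) / n,
        "decision_accuracy": sum(r["correct"] for r in records) / n,
        "p95_latency_ms": latencies[p95_index],
    }


records = [
    {"completed": True, "correct": True, "latency_ms": 120},
    {"completed": True, "correct": False, "latency_ms": 340},
    {"completed": True, "correct": True, "latency_ms": 95},
    {"completed": False, "correct": False, "latency_ms": 800},
    {"completed": True, "correct": True, "latency_ms": 210},
]

print(summarize(records))
```

Here decision accuracy is measured over all tasks, so an uncompleted task counts as an incorrect decision; measuring it over completed tasks only is an equally valid choice, as long as the definition is stated alongside the number.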

Governance ensures that AI agents remain reliable, auditable, and aligned with business rules.

Summary:

A system that only moves data will always wait for decisions. A system powered by AI agents acts in real time. This transition defines modern architecture. Priorise helps organizations engineer this shift with precision through advanced data consulting services. If you are ready to evolve beyond the Data Pipeline, now is the time to build systems that think, decide, and execute.

Ready to agent-ify? Book a free Priorise audit today at priorise.com/consult. Evolve your Data Pipeline now with expert AI consulting services!